For the last several years, Antmicro has been helping customers develop DC-SCM-compatible Baseboard Management Controller (BMC) platforms, including ones based on SoCs and FPGAs, as well as complete systems and modular technology demonstrators.

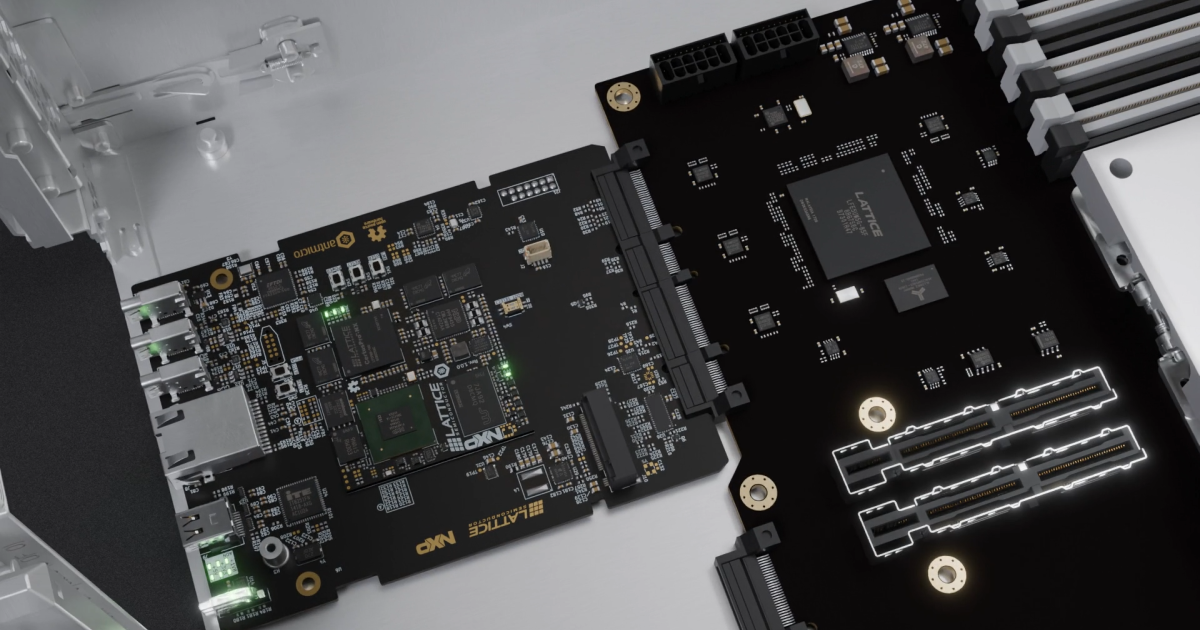

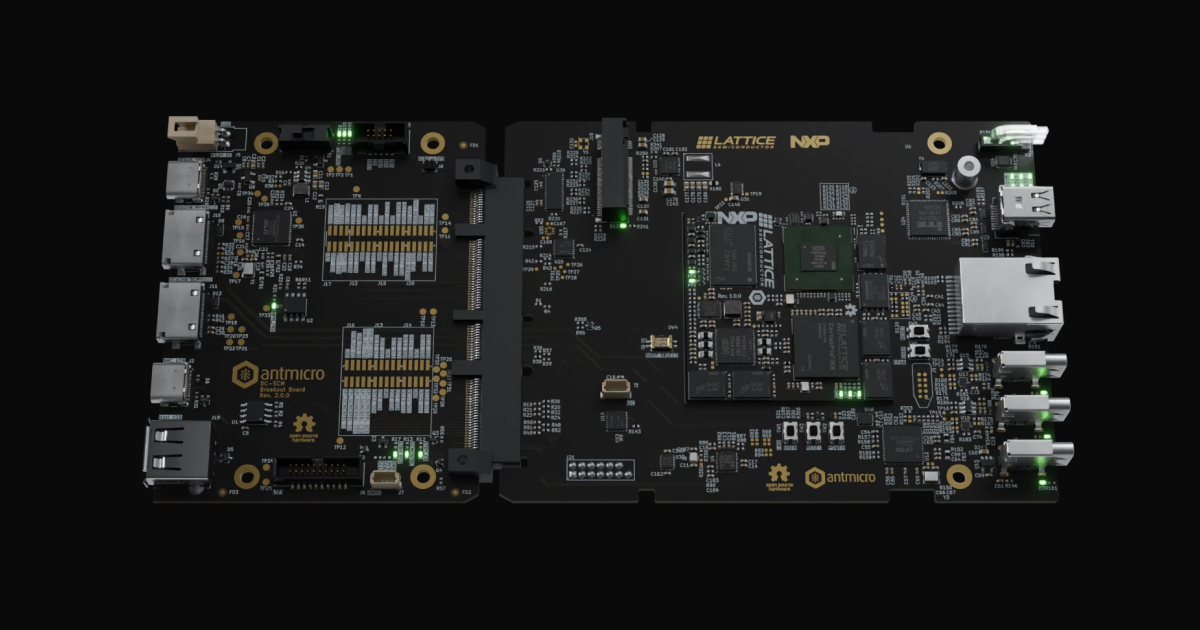

Last year, working with our partners Lattice and NXP, we introduced the open source DC-SCM 2.1 reference design featuring a Lattice CertusPro-NX FPGA and a multi-core i.MX943 SoC from NXP. This setup, consisting of a System on Module following the OSM-L standard and a complementary DC-SCM Carrier Card, constitutes a versatile development platform: standardized and open source, it can be used both as-is and as a base for derivative solutions that Antmicro can help build.

This article follows up on the one we published before OCP Global Summit 2025 and coincides with Antmicro’s presence with CHIPS Alliance at this year’s OCP EMEA Summit in Barcelona. In it, we expand on the topics related to the DC-SCM platform itself, discuss an FPGA-driven LTPI interface for hardware abstraction layers, and describe the related tools and solutions developed along the way.

Leveraging DC-SCM’s flexibility: an FPGA-driven LTPI interface for hardware abstraction layers

Since the introduction of the reference design last year, we have received many inquiries about the possibilities offered by our DC-SCM hardware and just how far it can be customized. With customers frequently seeking to adapt both the DC-SCM Carrier Card and the OSM-L SoM to make the system work with a custom server platform, we think it is useful to take a closer look at the central idea that enables this flexibility by design: a hardware abstraction layer that maps control interfaces via LTPI tunneling.

Our open hardware DC-SCM and OSM-L reference designs follow the DC-SCI connector pinout that was introduced by the Open Compute Project in the DC-SCM 2.0 Specification. The key change introduced with that revision is the unified LVDS Tunneling Protocol and Interface (LTPI) that enables communication between a DC-SCM (Data Center Secure Control Module) and an HPM (Host Processor Module) by passing multiple low-speed signals and communication buses (such as I2C, GPIO or UART) over one standardized interface.

In the solution developed with NXP and Lattice, the LTPI protocol can be implemented between two FPGAs, one located on the DC-SCM and the other in the HPM. The physical layer of the LTPI link consists of four differential pairs which provide full-duplex transfer: one data lane for transmission and another for reception, with the data lanes synchronized by separate clock lines. The clock speed is negotiated during the LTPI initialization process, so the LTPI communication tunnel can be established between devices offering different performance levels.
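The negotiation step can be pictured as each side advertising the clock speeds it supports, with the link settling on the fastest speed common to both. The Python sketch below illustrates only that idea: the speed values, the `negotiate_speed` name and the behavior when no common speed exists are assumptions made for illustration, not values or logic taken from the LTPI specification or the reference RTL.

```python
from typing import Iterable, Optional


def negotiate_speed(local_mhz: Iterable[int], remote_mhz: Iterable[int]) -> Optional[int]:
    """Return the fastest clock speed (in MHz) supported by both link partners.

    Returns None when the two sides share no common speed, in which case
    the tunnel cannot be established in this simplified model.
    """
    common = set(local_mhz) & set(remote_mhz)
    return max(common) if common else None


# Hypothetical capability sets for the two FPGAs (illustrative values only).
scm_speeds = [25, 50, 100, 200]
hpm_speeds = [25, 50, 100]

print(negotiate_speed(scm_speeds, hpm_speeds))
```

With these example sets, the slower device caps the link speed, which is how two FPGAs of different performance classes can still form a working tunnel.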

This approach reduces the number of control signals and interfaces that are routed between the HPM and the matching DC-SCM card, while differential signaling makes the communication less susceptible to potential electromagnetic interference.

In the proposed architecture, routing all control signals through a pair of FPGAs connected over LTPI introduces a hardware abstraction layer. In principle, the RTL designs for both FPGAs can implement signal routing and introduce some basic control logic so that various server configurations can be supported by simply switching the FPGA payloads.
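One way to picture this abstraction layer is as a per-platform routing table: the BMC software refers to logical signals, and the FPGA payload decides which tunneled channel carries each of them. The sketch below models that lookup in Python rather than RTL. All platform names, signal names and channel numbers are hypothetical and do not come from the reference design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    kind: str      # interface type carried over the tunnel, e.g. "i2c" or "gpio"
    channel: int   # hypothetical index of the tunneled channel


# Hypothetical FPGA "payloads": one routing table per server platform.
PAYLOADS = {
    "platform_a": {
        "fan_ctrl": Route("i2c", 0),
        "temp_sensors": Route("i2c", 1),
        "power_good": Route("gpio", 3),
    },
    "platform_b": {
        "fan_ctrl": Route("i2c", 2),
        "temp_sensors": Route("i2c", 2),
        "power_good": Route("gpio", 7),
    },
}


def resolve(platform: str, signal: str) -> Route:
    """Look up where a logical BMC signal is carried for a given platform."""
    try:
        return PAYLOADS[platform][signal]
    except KeyError as exc:
        raise LookupError(f"no route for {signal!r} on {platform!r}") from exc


# The same logical signal resolves to different channels per platform.
print(resolve("platform_a", "fan_ctrl"))
print(resolve("platform_b", "fan_ctrl"))
```

In this model the software above the table stays the same across platforms, and only the table (standing in for the FPGA payload) changes.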

We have illustrated the LTPI concept with our System Designer entry showcasing the OCP 2025 demonstration setup. This physical setup was based on an OCP-compliant Intel Lincoln City HPM, on which we implemented temperature measurement and controlled the chassis-mounted cooling fans from an OpenBMC instance running on the DC-SCM. Both the temperature sensors and the fan PWM drivers were accessible to the DC-SCM through I2C buses tunneled via LTPI. The setup is visualized in the Fan control demo section in our System Designer.

To take control of the fan PWM driver and temperature sensors on board the reference HPM, we modified the reference LTPI design and, based on that, created two bitstreams: one for the MachXO3D FPGA located on the HPM and the other for the CertusPro-NX FPGA located on the SCM. Both FPGAs were then able to communicate using LTPI version 1.4.3, which is compliant with the DC-SCM Protocol Specification 2.0. For the purpose of the demo, we tunneled only the basic interfaces, I2C and GPIO, and used an OpenBMC distribution that comes with pre-installed I2C manipulation programs. Using the tunneled I2C, we were able to control the speed of the fans connected to the Lincoln City Server and Fan Control Board and read the temperature sensors located on the HPM CPU inlet/outlet. The temperature readouts were showcased with a WebUI interface served by the DC-SCM card over a local network.

DC-SCM breakout board rev. 2.0

To streamline the development and integration of custom DC-SCM cards and OpenBMC software, a few years back we developed an open hardware DC-SCM breakout board. We have now revised this design and released it as revision 2.0, which is compatible with rev. 2.x of the DC-SCM standard and therefore also with our DC-SCM Carrier Card for OSM-L SoMs.

Antmicro’s DC-SCM breakout board enables easy software development, debugging and validation of DC-SCM platforms, letting you test them thoroughly in a lab environment prior to installation next to an HPM. It exposes DC-SCI interfaces such as LTPI, PCIe, USB, UART, SPI, QSPI and I2C, all in a 90 x 70 mm (3.54 x 2.76”) PCB outline. It also provides the DC-SCM Carrier Card with power and enables access to the UART interface exposed on the DC-SCI connector, which is typically used for debug console output.

The revised variant of the DC-SCM breakout board can be easily adjusted or expanded to support further integration with complex devices and systems that rely on DC-SCM control and commissioning. A custom variant of the DC-SCM breakout board could, for example, mimic relevant functionalities of the target HPM, rack management system and so on.

Support for FRU definitions in System Designer

Antmicro has been systematically building out a collection of open source tools, workflows and design methodologies that enable rapid prototyping, support design and integration of complex multi-node systems, and streamline knowledge management. This lets us and our customers pursue vertical integration of the entire technology stack and a single source of truth shared between hardware, software, mechanical design and other teams.

These open tools and workflows come together in Antmicro’s System Designer portal, which centers on a vast library of hardware components and reference designs (like the DC-SCM reference) on the one hand and the ability to describe and track completely custom projects on the other. At the core of the System Designer portal is the Visual System Designer (VSD) tool, which facilitates the creation of multi-node diagrams. VSD provides an efficient method for designing and analyzing complex hierarchical devices and installations such as those found in modern server rooms. It can help you visualize the servers themselves, combined with power distribution units, coolant distribution units and rack management.

HPM architectures and components can be described using a DC-MHS PnP FRU (Data Center Modular Hardware System Plug-and-Play Field Replaceable Unit) definition, a standard driven by the Open Compute Project to simplify hardware discovery, integration and the production of auto-generated documentation for the modern data center facility.

The FRU definition makes it possible to decipher the internal structure of the server, and it drives the (additional) discovery stage in the server boot process. In this stage, the FPGA on the DC-SCM reads the FRU JSON stored in the FRU I2C EEPROM on the HPM board and dynamically reconfigures the local FPGA to match the LTPI control signal mapping and general HPM requirements before the HPM boot process starts.

To take full advantage of the availability of FRU-based server descriptions, we have recently developed a Python script called Fru2Graph that converts FRU JSON files into specification and data flow files which can then be loaded into System Designer’s online editor or deployed locally using the underlying Pipeline Manager tool. The resulting diagram provides an interactive visualization of the data flow within the system, with all the interfaces and connections between them. An example of a complex multi-layer device structure derived from the DC-MHS definition is shown below. In particular, you can navigate through sub-graphs and switch the connection maps with respect to specific interfaces available on modern HPMs such as I2C, I3C, PCIe, USB and many more.

For an interactive version of the diagram, visit the desktop version of the website
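To give a rough feel for the kind of conversion Fru2Graph performs, the sketch below turns a small JSON description into a node list and an edge list, then filters the connections by interface type. This is not Fru2Graph’s implementation: the JSON layout is a heavily simplified, hypothetical stand-in for a real DC-MHS FRU definition, the output is a plain Python dictionary rather than Pipeline Manager’s specification or data flow format, and the function names are invented for illustration.

```python
import json

# Hypothetical, heavily simplified FRU-like description (not the real schema).
FRU_JSON = """
{
  "components": [
    {"id": "bmc", "name": "DC-SCM"},
    {"id": "cpu0", "name": "CPU 0"},
    {"id": "fan_ctrl", "name": "Fan controller"}
  ],
  "links": [
    {"from": "bmc", "to": "fan_ctrl", "interface": "I2C"},
    {"from": "bmc", "to": "cpu0", "interface": "PCIe"},
    {"from": "bmc", "to": "cpu0", "interface": "I2C"}
  ]
}
"""


def fru_to_graph(fru: dict) -> dict:
    """Turn the simplified description into a node map and an edge list."""
    nodes = {c["id"]: c["name"] for c in fru["components"]}
    edges = []
    for link in fru["links"]:
        # Reject links that point at components missing from the description.
        for end in (link["from"], link["to"]):
            if end not in nodes:
                raise ValueError(f"unknown component: {end}")
        edges.append((link["from"], link["to"], link["interface"]))
    return {"nodes": nodes, "edges": edges}


def edges_for(graph: dict, interface: str) -> list:
    """Select only the connections that use the given interface."""
    return [(src, dst) for src, dst, kind in graph["edges"] if kind == interface]


graph = fru_to_graph(json.loads(FRU_JSON))
print(edges_for(graph, "I2C"))
```

Filtering the edge list by interface mirrors, in a very reduced form, the ability to switch connection maps between interfaces such as I2C and PCIe in the interactive diagram.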

Secure, scalable and customizable data center solutions with Antmicro

By combining our open hardware designs with the System Designer methodology, backed by practical helper tools such as Fru2Graph, Antmicro assists customers in developing secure, configurable and flexible server management and monitoring infrastructure. Our open hardware platforms, including the DC-SCM Carrier Card and OSM-L SoM, are fully customizable in terms of on-board interfaces, component layout and PCB outline.

Antmicro’s wide range of engineering services for developing data center solutions includes designing custom hardware (from single baseboards to entire clusters), integrating security measures such as Root of Trust, and comprehensive testing using a broad portfolio of open source tools such as the Rowhammer memory testing suite, the Renode simulation framework, and Protoplaster. If you would like to learn more, don’t hesitate to contact us at contact@antmicro.com.