Working with hundreds of open source projects, Antmicro runs countless CI systems, many of them public-facing and based on GitHub Actions. For large-scale CI projects that require significant processing resources, we have been developing custom open source software and hardware for CI runners and using them with GitHub Actions, as described in previous blog notes. Over the years, in collaboration with Google and to improve integration with Google Cloud Platform (GCP), we’ve implemented a number of new features, which we describe in this blog note.

Challenges with using standard runners

Many open source projects are hosted on GitHub and use the default, GitHub-hosted CI with free runners. GitHub offers machines with the following parameters:

- 2-core CPU,

- 7 GB of RAM,

- 14 GB of SSD disk space,

- a 6-hour maximum job run time,

- a limited number of jobs running at once inside a single organization.

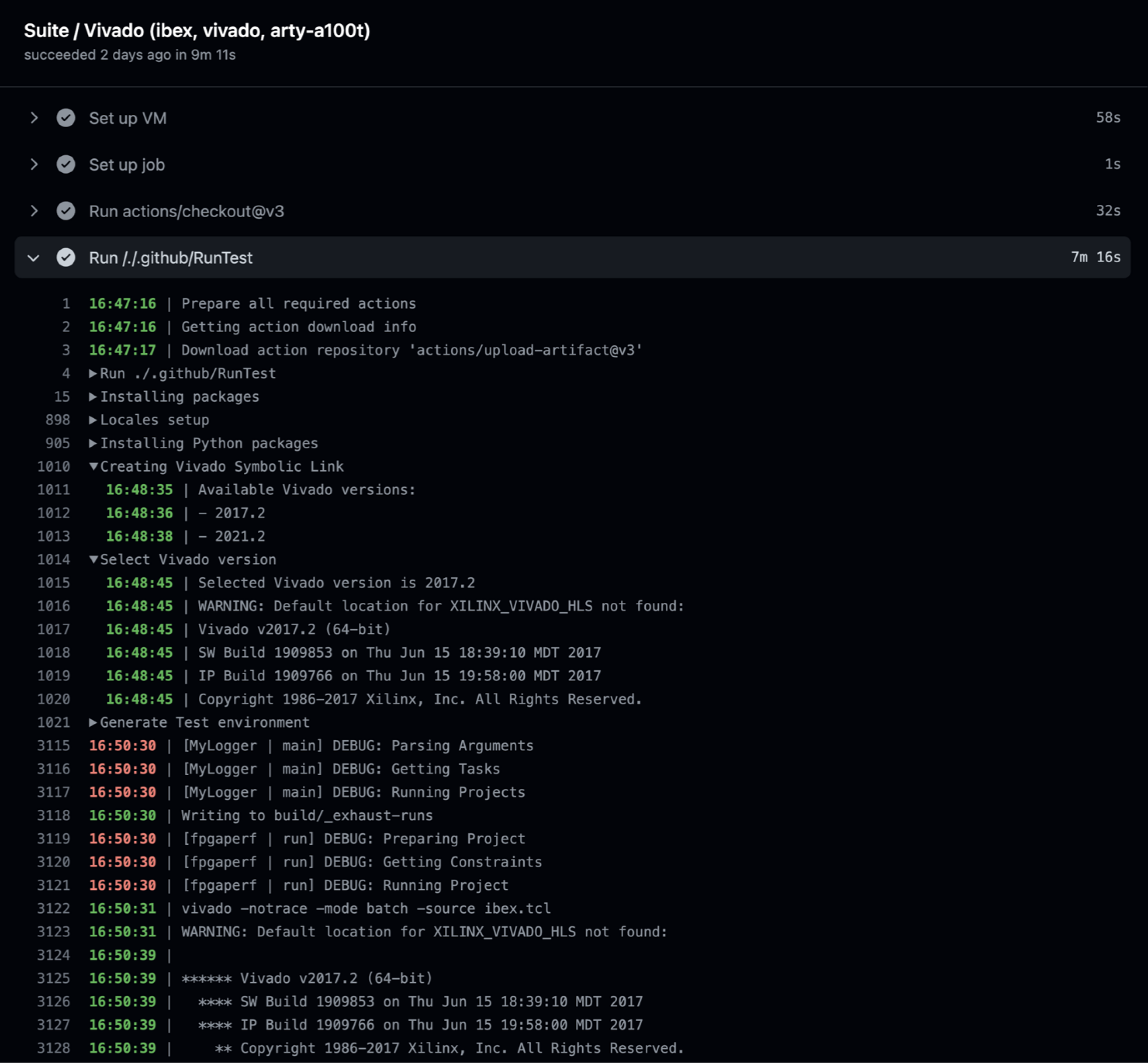

While the standard runners are great for testing small projects, the complexity of our use cases often exceeds their capabilities, either in terms of compute power or storage. Another problem is that some projects, e.g. ASIC design and verification or FPGA development and testing, often require proprietary tools. Antmicro’s custom runners allow us to solve these problems, providing a solution that is both open source and scalable.

Building on the flexibility of GCP

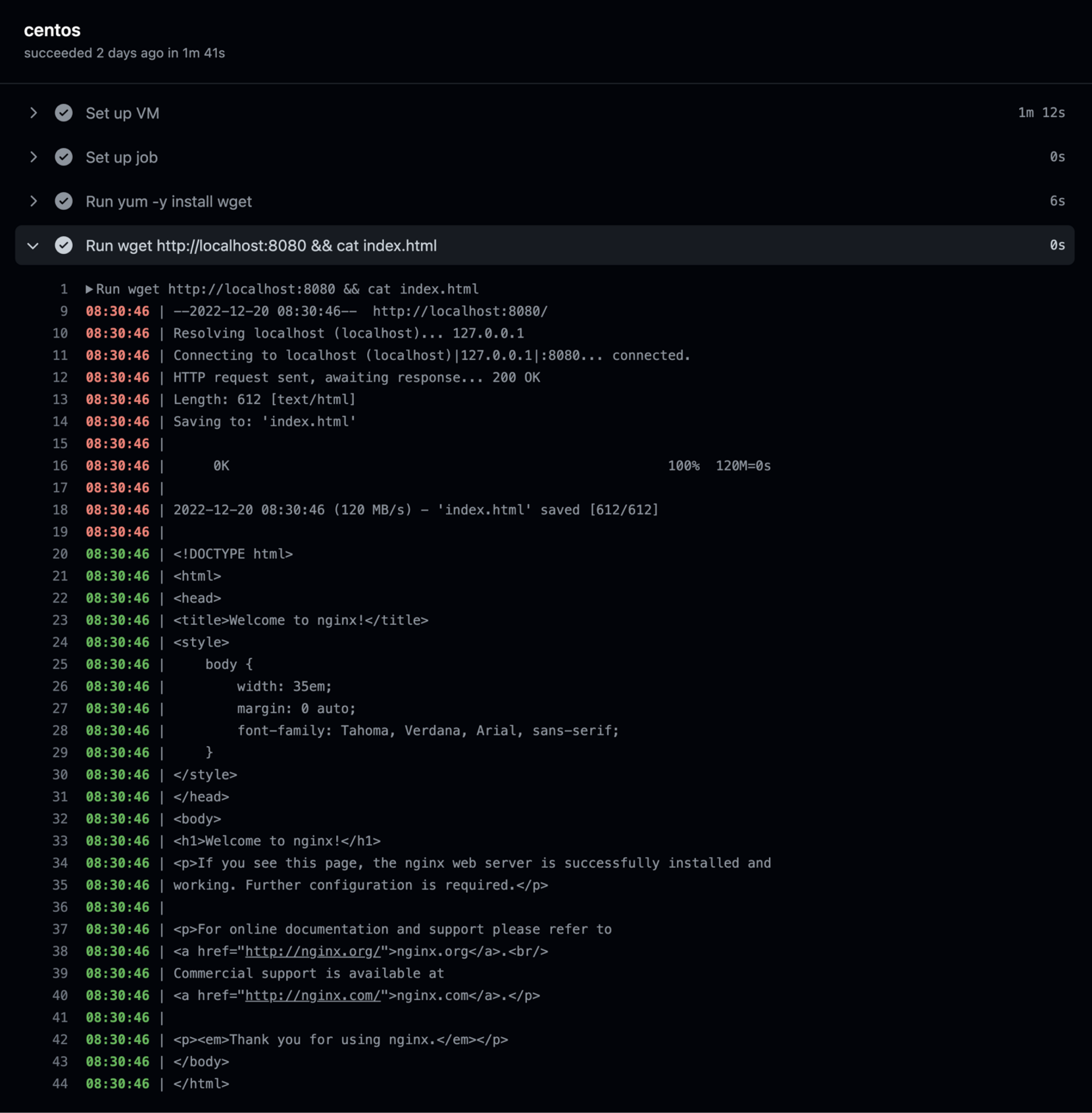

To make the CIs we implement highly scalable, we combine internal compute resources with the capabilities of Google Cloud Platform. GCP allows us to spawn machines on demand and fully control their parameters, e.g. by adding more RAM or CPU cores, or by running as many parallel jobs as we see fit. This allows us to massively speed up testing and building, which is key to rapid, iterative development. Great examples of such parallelization are some of the projects that Antmicro is running together with Google under the auspices of CHIPS Alliance:

- the SystemVerilog test suite - spawning parallel machines for testing the support for SV features in various tools which are included in the suite.

- F4PGA examples - running massive tests of the F4PGA toolchain for many different Linux distributions and spawning up to 256 machines at once. Switching to custom GCP runners reduced test times to 1 h, even with more test cases added.

But scalability is not the only benefit of custom runners with GCP. Equally important is the flexibility GCP and custom runners offer for our CIs, which has been a major focus area of the development throughout the recent months. Read more about new features we’ve recently added to the custom runners below.

Improving integration with GCP infrastructure

Attaching an external GCP disk to each job

This feature can be used to attach a disk containing e.g. an external proprietary toolchain, a semi-confidential set of tools or large files that wouldn’t be feasible or even possible to store in a repository, as GitHub has a hard limit of 2 GB per file in a repo. Disks are read-only and may be shared by multiple jobs concurrently.
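As a rough illustration of what a shared, read-only disk looks like at the Compute Engine level, the sketch below builds the `disks` entry of an instance request body. The `source`, `mode`, `boot` and `autoDelete` fields come from the public Compute Engine API; the function name and the project, zone and disk names are placeholders, and nothing here is meant to describe how the runner's coordinator actually attaches disks.

```python
def read_only_disk(project: str, zone: str, disk_name: str) -> dict:
    """Build an attachedDisk entry for a Compute Engine instance request.

    All values are supplied by the caller; the names used in the example
    call below are hypothetical.
    """
    return {
        # Partial URL of an already existing persistent disk.
        "source": f"projects/{project}/zones/{zone}/disks/{disk_name}",
        # READ_ONLY lets the same disk be attached to several VMs at once.
        "mode": "READ_ONLY",
        "boot": False,
        # Keep the shared disk when the worker VM is deleted.
        "autoDelete": False,
    }


# Hypothetical project, zone and disk names, for illustration only.
disk = read_only_disk("example-project", "us-central1-a", "toolchain-disk")
print(disk["source"])
```

Because the disk is attached in `READ_ONLY` mode, several worker machines can mount it at the same time, which matches the concurrent sharing described above.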

Selecting which job will spawn a preemptible machine

Preemptible machines are Compute Engine machines which have a significantly lower per-hour cost, with a disclaimer that they can be shut down at any given moment to make room for standard machines if need be. The cost difference is quite stark, as can be seen on a GCP instance comparison page after selecting “Linux On Demand cost” and “Linux Preemptible cost” in the “Columns” dropdown.
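To make the per-job choice more concrete, here is a minimal sketch of the `scheduling` section of a Compute Engine instance request for both kinds of machine. The field names (`preemptible`, `automaticRestart`, `onHostMaintenance`) are part of the public Compute Engine API; the helper function itself is hypothetical and is not taken from the runner's code.

```python
def scheduling_for(preemptible: bool) -> dict:
    """Return the `scheduling` section of a Compute Engine instance body."""
    if preemptible:
        return {
            "preemptible": True,
            # Preemptible VMs cannot restart automatically or live-migrate.
            "automaticRestart": False,
            "onHostMaintenance": "TERMINATE",
        }
    # Typical settings for a standard (on-demand) VM.
    return {
        "preemptible": False,
        "automaticRestart": True,
        "onHostMaintenance": "MIGRATE",
    }


print(scheduling_for(preemptible=True))
```

A job that can tolerate being interrupted and rerun is a natural candidate for the preemptible variant, while long or hard-to-resume jobs are better kept on standard machines.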

Selecting machine type per-job

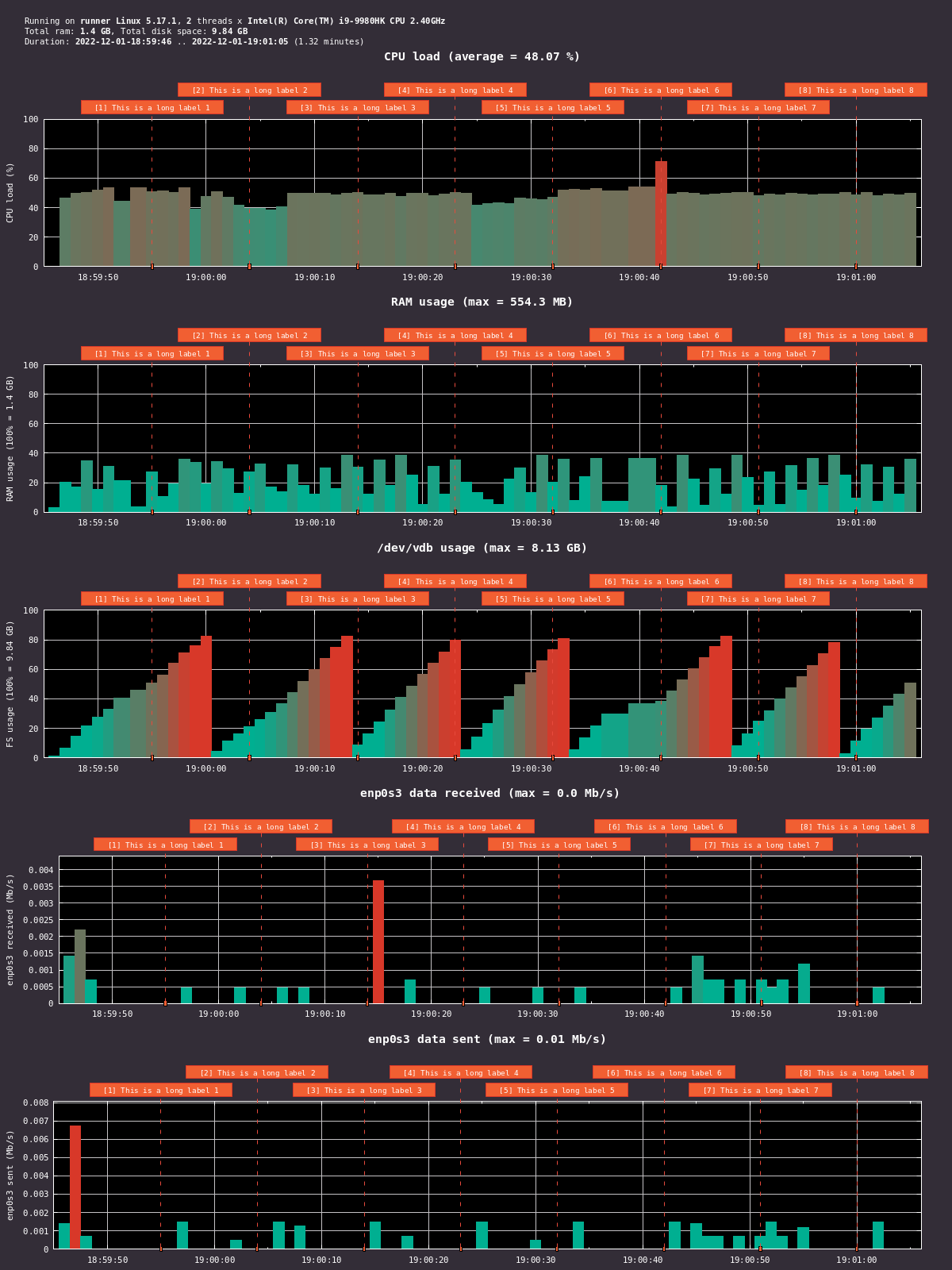

The maintainers of the runner deployment create an allowlist of machine types that are permitted for use within the repository (this prevents malicious actors from incurring high costs by selecting bulky machines in Pull Requests). The machine type can be set per job or as a global workflow setting, and Antmicro’s open source Sargraph tool can be used to determine whether more or less compute power needs to be allocated.
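The allowlist check can be pictured with a short sketch like the one below. It is only an illustration of the idea: the allowlist contents, the default machine type and the function name are all assumptions made for this example, not the runner's actual configuration format.

```python
# Hypothetical allowlist maintained by the people running the deployment.
ALLOWED_MACHINE_TYPES = frozenset(
    {"n2-standard-4", "n2-standard-16", "n2-highmem-8"}
)
# Hypothetical fallback used when a job does not ask for anything specific.
DEFAULT_MACHINE_TYPE = "n2-standard-4"


def resolve_machine_type(requested, allowed=ALLOWED_MACHINE_TYPES,
                         default=DEFAULT_MACHINE_TYPE):
    """Return the machine type to spawn for a job, or raise if not allowed."""
    if requested is None:
        return default
    if requested not in allowed:
        # Reject anything outside the allowlist, e.g. a costly machine
        # requested from an untrusted Pull Request.
        raise ValueError(f"machine type {requested!r} is not on the allowlist")
    return requested


print(resolve_machine_type("n2-highmem-8"))
print(resolve_machine_type(None))
```

Rejecting unknown types outright, rather than silently falling back to the default, makes a misconfigured or malicious request visible in the job log.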

Attaching a service-account

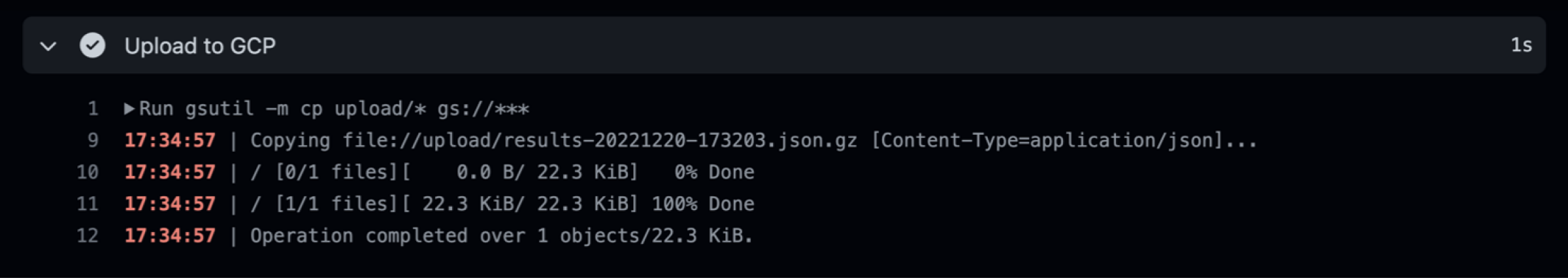

This feature makes it possible to use virtually all of GCP’s product offering at a workflow level. The service account has to be granted specific permissions, so attaching it doesn’t mean giving it access to everything in your GCP project. Some use cases include:

- interfacing with Google Cloud Storage (e.g. pushing files, downloading them, checking metadata, etc.),

- using BigQuery,

- pushing containers to the Container Registry.
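For the use cases listed above, code running on a VM with an attached service account typically gets short-lived credentials from the Compute Engine metadata server. The sketch below shows that mechanism using only the Python standard library; the metadata URL and the `Metadata-Flavor` header are standard Compute Engine behaviour, while the function names are made up for this example and the snippet assumes the job actually runs on such a VM.

```python
import json
import urllib.request

# Standard Compute Engine metadata endpoint for the attached service account.
TOKEN_URL = (
    "http://metadata.google.internal/computeMetadata/v1/"
    "instance/service-accounts/default/token"
)


def build_token_request() -> urllib.request.Request:
    """Prepare the metadata-server request without sending it."""
    # The metadata server rejects requests that lack this header.
    return urllib.request.Request(TOKEN_URL, headers={"Metadata-Flavor": "Google"})


def fetch_access_token(timeout: float = 5.0) -> str:
    """Fetch an OAuth2 access token; only works from inside a GCP VM."""
    with urllib.request.urlopen(build_token_request(), timeout=timeout) as resp:
        return json.load(resp)["access_token"]
```

In practice the Google Cloud client libraries and the `gcloud` CLI perform this lookup automatically, so a workflow step usually does not need to handle tokens by hand.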

Creating an ssh-tunnel

All virtual machines managed by the runner run within a separate VPC network where strict firewall rules are enforced, thus preventing worker machines from accessing other machines within a GCP project. Tunneling is implicit (meaning that it is a feature of the coordinator software), which makes things more secure. This feature can be used, e.g., to securely access a server that provides a proprietary tool license.
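The coordinator sets the tunnel up on its own, so none of this has to be written in a workflow. Purely to illustrate what an SSH port-forwarding tunnel to a license server amounts to, the sketch below assembles an equivalent manual `ssh` command. The helper name, host names and port are hypothetical, and the choice of local forwarding (`-L`) is an assumption made for the example, not a description of the coordinator's implementation.

```python
def ssh_tunnel_command(local_port: int, target_host: str,
                       target_port: int, gateway: str) -> list:
    """Build an argv list for a local port-forwarding SSH tunnel."""
    return [
        "ssh",
        "-N",  # do not run a remote command, only forward ports
        "-L", f"{local_port}:{target_host}:{target_port}",
        gateway,
    ]


# Hypothetical hosts and port, for illustration only.
cmd = ssh_tunnel_command(27000, "license.internal", 27000, "ci@gateway.example")
print(" ".join(cmd))
```

With such a tunnel in place, a tool on the worker would talk to `localhost:27000` while the firewall rules of the VPC network stay unchanged.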

Custom GitHub runners in practice

All the new features mentioned above have already been deployed in various open source projects. Using custom machines allowed us to reduce the duration of some SV test runs from 6 h to 2 h, and the runners are helping in the development of Verible, VtR, Renode and more. External storage enables integration with proprietary tools, needed e.g. for our F4PGA interchange work, which concerns interoperability of open and closed FPGA tooling. Since each test is run on a separate machine, the tests do not interfere with each other and therefore provide better results (e.g. in tool-perf, where we got stable results). We can also automatically spawn a daemon in the background with a process providing some sensitive data. The daemon runs in a separate container and is isolated from the main build/testing process, ensuring security of the data.

For your advanced CI needs, Antmicro can help you set up scalable, GCP-based testing for your projects with custom GitHub runners tailored to your needs. For more information about the runners, integration with GCP or any of Antmicro’s other open source tools, reach out to us at contact@antmicro.com.