As you may remember from previous posts, our involvement in MOPED, the automotive research platform originally created by the Software and Systems Engineering Laboratory (SSE) of SICS, has mainly revolved around introducing the Nvidia Tegra as its main processing unit, to better reflect the typical setup found in many modern cars.

The original conversion of MOPED proposed by Antmicro involved an Nvidia Jetson TK1. By enabling on-board CUDA processing on its built-in GPU, the TK1 already offers impressive parallel processing capabilities compared to CPU-only systems. (The TK1-enabled MOPED was just demoed at the Innovation Bazaar of the Vehicle ICT Arena in Gothenburg, but we will write about that event in another note.)

But there is a new kid on the block now, the TX1, and as with every new generation, it was to be expected that the new Tegra would provide a significant performance improvement over its predecessor.

We are one of the lucky few to have had the Jetson TX1 platform for some time now, so it was an obvious choice to run our MOPED road sign recognition software on this beast of a SoC, which combines Cortex-A57 cores with a 256-core Maxwell GPU.

The results are pretty impressive, but let us first recap what MOPED is.

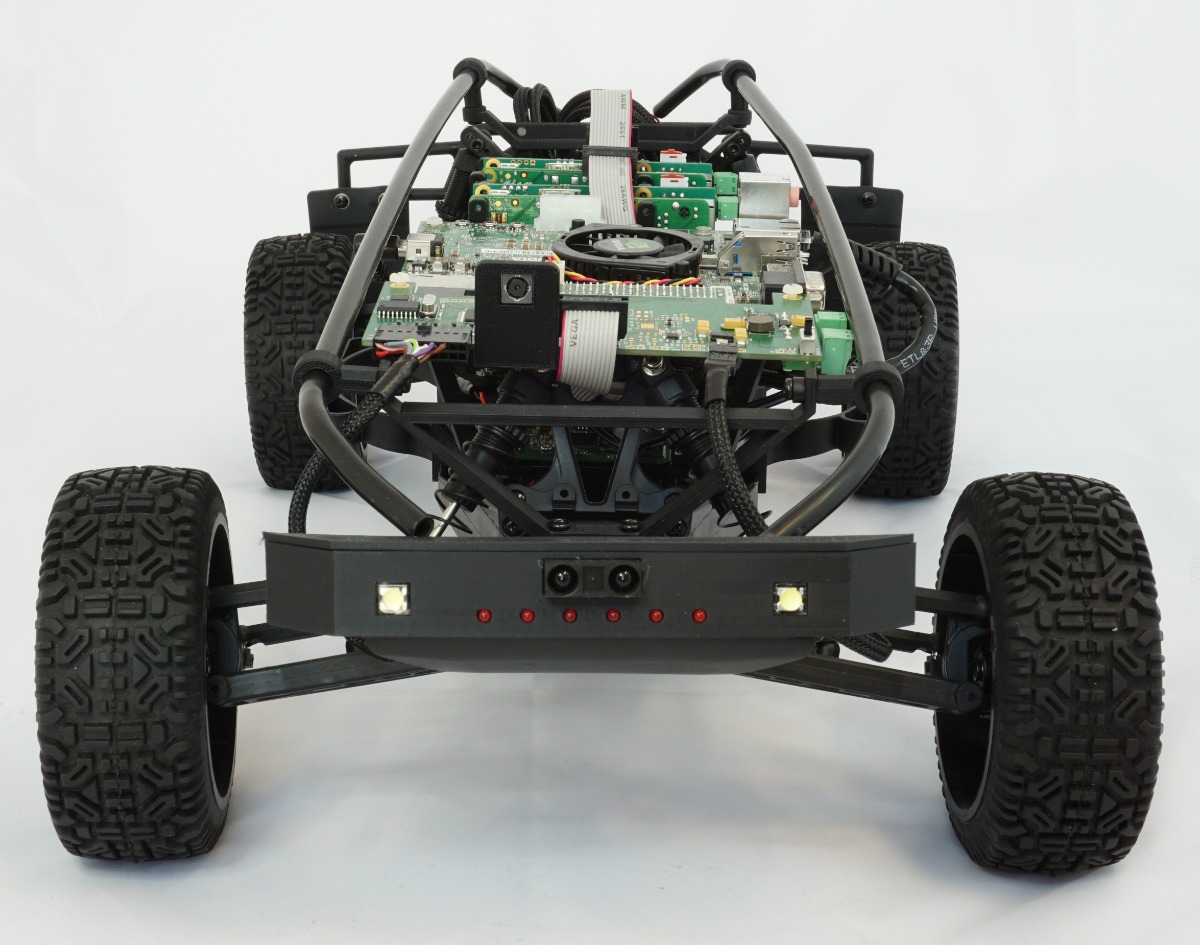

MOPED was originally built for research into automotive software architecture and post-production enhancement of a car’s capabilities (so, adding cool new features through software upgrades, Tesla style). Besides the main Tegra processing board, it is also equipped with two Raspberry Pis running ArcCore’s open source implementation of the AUTOSAR automotive real-time operating system standard, which controls the entire on-board mechanics over the CAN bus.

While remotely controlling the car from a smartphone was already possible thanks to the original work done by our friends from SICS, the Tegra upgrade allows for live video streaming, object detection and, as a result, control of the vehicle’s velocity and direction based on the information gathered.

For the comparison, we focused on road sign recognition, as it makes for a nice and contained use case. The algorithm is based on artificial neural networks trained with deep learning methods. In our case we use the Caffe framework from the University of California, Berkeley, together with Nvidia’s cuDNN library, both of which are popular and fast tools for deep learning (Caffe is also open source).

Architecture of the setup

The application is based on the OpenCV library, which handles camera configuration and frame capture. The application performs the road sign recognition and prepares images for H.264 encoding and streaming to the paired platform (we are using a Microsoft Surface for demos).
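The flow described above (capture, recognition, then encoding for streaming) can be pictured as a chain of stages connected by queues. The sketch below is only an illustration of that idea using the Python standard library; it is not the actual MOPED application, and `stage`, `run_pipeline`, `recognize` and `encode` are hypothetical names standing in for the OpenCV capture, the recognition step and the H.264 encoder.

```python
import queue
import threading

STOP = object()  # sentinel that tells a stage to shut down


def stage(work, inbox, outbox):
    """Apply work() to every item from inbox and forward the result to outbox."""
    while True:
        item = inbox.get()
        if item is STOP:
            outbox.put(STOP)  # propagate shutdown to the next stage
            return
        outbox.put(work(item))


def run_pipeline(frames, recognize, encode, depth=4):
    """Push frames through recognize -> encode, each running in its own thread.

    `recognize` and `encode` are placeholders (assumptions) for the real
    recognition and encoding steps. Error handling is omitted for brevity.
    """
    raw = queue.Queue(maxsize=depth)        # captured frames
    annotated = queue.Queue(maxsize=depth)  # frames with recognition results
    encoded = queue.Queue()                 # unbounded, drained after the workers finish

    workers = [
        threading.Thread(target=stage, args=(recognize, raw, annotated)),
        threading.Thread(target=stage, args=(encode, annotated, encoded)),
    ]
    for worker in workers:
        worker.start()

    for frame in frames:  # "capture" runs in the calling thread
        raw.put(frame)
    raw.put(STOP)

    for worker in workers:
        worker.join()

    results = []
    while True:
        item = encoded.get()
        if item is STOP:
            return results
        results.append(item)
```

With one worker per stage, frames leave the pipeline in the order they were captured, and the bounded queues keep a slow stage from piling up unprocessed frames in memory.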

The setup includes signs of different shapes and sizes, which are displayed on an external monitor. The type of each sign and its position are randomized. The classification thread has to solve two problems: finding signs in the surrounding environment and recognizing their type. The latter is done on the GPU with CUDA, using the cuDNN and Caffe frameworks.
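The two problems mentioned above, finding signs and then recognizing their type, map naturally onto a two-stage function. The following is a minimal sketch of that structure only: `detect` and `classify` are hypothetical callables, and in the real application the classification step would be backed by the Caffe and cuDNN network rather than a plain Python function. The `min_score` threshold is likewise an assumption added for illustration.

```python
from dataclasses import dataclass
from typing import Tuple

Box = Tuple[int, int, int, int]  # x, y, width, height in pixels


@dataclass
class Sign:
    box: Box      # where the sign was found in the frame
    label: str    # recognized sign type
    score: float  # classifier confidence in [0, 1]


def recognize_signs(frame, detect, classify, min_score=0.5):
    """Stage 1: detect(frame) yields candidate boxes.
    Stage 2: classify(frame, box) returns a (label, score) pair.
    Candidates scoring below min_score are discarded.
    """
    signs = []
    for box in detect(frame):
        label, score = classify(frame, box)
        if score >= min_score:
            signs.append(Sign(box, label, score))
    return signs
```

Keeping the stages separate means a cheap detector can run on the whole frame, while the more expensive network is only invoked on the candidate regions.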

Since object detection and recognition are computationally demanding, performing them in real time on embedded platforms used to be difficult. That changed when platforms like the Nvidia Tegra K1 and X1 emerged, raising the performance per watt for such applications to levels that make it possible to pack them into a relatively small physical footprint.

Of course, the TX1 is not exactly the smallest or lowest-power SoC, but with its amazing performance it does open up some new application areas in robotics and vision systems that might not have been possible before.

In our case, the performance of the CUDA-based recognition algorithm, measured in frames per second (FPS), improved by over 100%, meaning the frame rate more than doubled. This shows that the TX1 is certainly an interesting platform, provided you can afford the larger size, power budget and cost.
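For readers who want to run a similar comparison, the sketch below shows one simple way to measure FPS and express the difference between two platforms as a percentage. It is an assumption about how such a measurement could be done, not the procedure used for the numbers above; `measure_fps` and `improvement_percent` are hypothetical helper names.

```python
import time


def measure_fps(process_frame, frames, clock=time.perf_counter):
    """Run process_frame over all frames and return frames per second."""
    start = clock()
    count = 0
    for frame in frames:
        process_frame(frame)
        count += 1
    elapsed = clock() - start
    return count / elapsed if elapsed > 0 else float("inf")


def improvement_percent(old_fps, new_fps):
    """Relative change from old_fps to new_fps, in percent."""
    return (new_fps - old_fps) / old_fps * 100.0
```

By this definition, an improvement of exactly 100% corresponds to a doubled frame rate, so "over 100%" means the newer platform processes more than twice as many frames per second as the older one.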

If you are interested in building your next product with the Tegra K1 or X1, reach out to us at contact@antmicro.com – we will surely find a way to help.