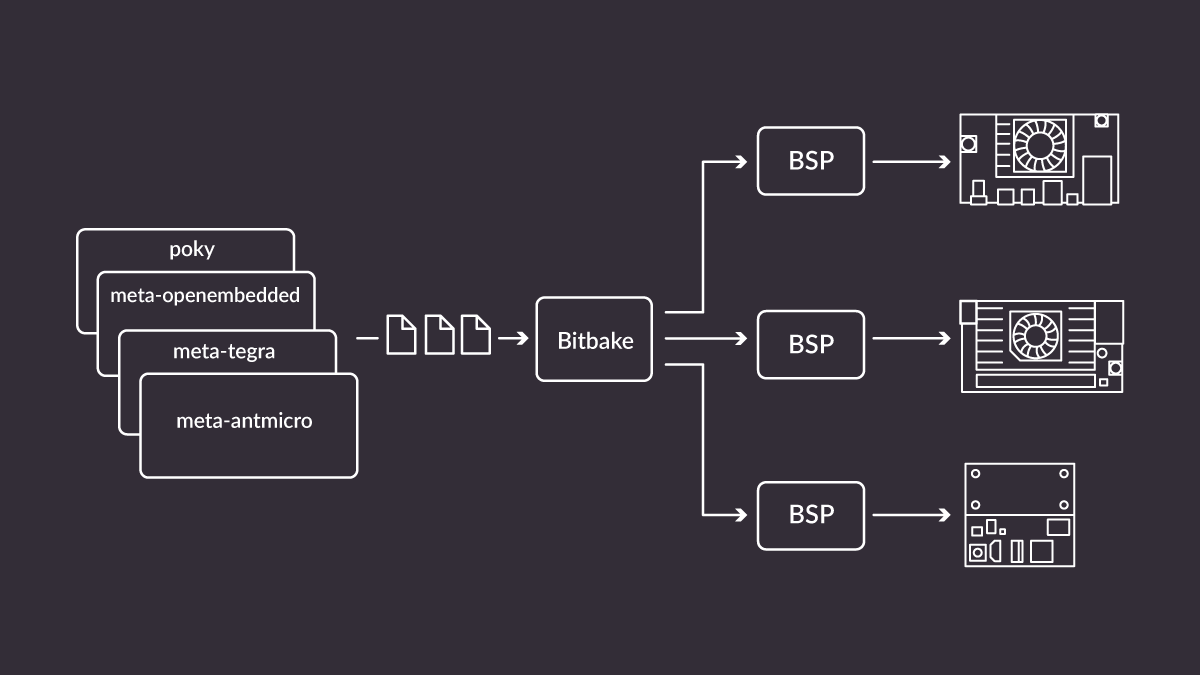

Antmicro has been working with a large number of customers implementing AI software on embedded systems, helping them take full advantage of an open source-based approach. To achieve this, we created a complete methodology built around open source tools (many of which we designed ourselves) that allows us to build reproducible, production-ready embedded software for customers across numerous verticals. In complex edge AI projects, this often involves developing CI systems that can build a reproducible operating system image (often called a board support package, or BSP) from known, traceable components. With the right expertise from Antmicro, the result is a minimal, tailored system that contains the AI software and all its dependencies, optimized for the target hardware.

This approach has multiple advantages:

- Reproducibility - anyone with supported hardware can flash the image onto the board and get the same working environment, with no room for confusion.

- Ease of use - the ideal product should just work when you turn it on. With a tailored BSP, you have full control over the boot process and the operation of your device.

- Portability - the same approach can be used to create a BSP for different hardware platforms, SoMs or architectures. We can use it to deploy any AI application, with any framework or library necessary.

Using Yocto for reproducible BSPs

To create BSPs from known sources, we often use Yocto, a cross-platform embedded Linux build system. The main building block of a Yocto-based system is a recipe, which describes how to fetch, build and install a single piece of software. Recipes are logically grouped into layers, which can be organized by the features they introduce, the software category, or the hardware they support. Yocto also provides mechanisms for extending existing recipes (.bbappend files), e.g. to add hardware-specific compilation flags to a build, such as enabling CUDA in certain libraries. This makes Yocto a scalable, reusable and highly modular tool for building BSPs tailored to a specific task and hardware.
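A recipe enhancement lives in a .bbappend file, which BitBake merges into the recipe with a matching name. The Python sketch below is a simplified model of that name-matching rule, not BitBake's own code. An append file applies when its base name matches the recipe's exactly, or when a % placed directly before the .bbappend extension acts as a wildcard for the rest of the name. The helper bbappend_applies and the file names used with it are hypothetical.

```python
from pathlib import PurePath


def _strip_suffix(name: str, suffix: str) -> str:
    # Remove a file extension if present (str.removesuffix needs Python 3.9+).
    return name[: -len(suffix)] if name.endswith(suffix) else name


def bbappend_applies(bbappend: str, recipe: str) -> bool:
    """Simplified model: would this .bbappend extend this .bb recipe?"""
    append_stem = _strip_suffix(PurePath(bbappend).name, ".bbappend")
    recipe_stem = _strip_suffix(PurePath(recipe).name, ".bb")
    if append_stem.endswith("%"):
        # '%' directly before .bbappend matches any remaining characters,
        # e.g. opencv_%.bbappend matches every opencv_<version>.bb.
        return recipe_stem.startswith(append_stem[:-1])
    # Without a wildcard, recipe name and version must match exactly.
    return append_stem == recipe_stem
```

With this rule, opencv_%.bbappend extends opencv_4.5.5.bb, while opencv_4.5.2.bbappend does not. The wildcard form keeps an enhancement (such as extra CUDA flags) applied across version bumps of the base recipe.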

We use Yocto as our system builder because it gives us a concise way of creating dedicated systems for the wide range of custom hardware platforms we build for our clients, often based on our open source hardware designs, such as Antmicro’s Jetson Nano/TX2 NX/Xavier NX baseboard or our Google Coral baseboard.

Since we regularly build customized operating system images with Yocto for the dedicated hardware we create, we decided to open source the recipes for the various tools and libraries we add to our projects, including deep learning frameworks, runtimes and AI-based applications, as a dedicated Yocto layer called meta-antmicro.

This layer includes sample recipes and configuration files for building a BSP that demonstrates darknet object detection with an ImGui-based interface and can be deployed on Jetson platforms.

Yocto meta-antmicro layer overview

The meta-antmicro Yocto layer consists of several sublayers, each focusing on a different aspect of the system or on the target hardware:

- The meta-antmicro-common sublayer is target-independent. It contains basic system configuration, as well as common, useful tools such as pyvidctrl for camera manipulation and frame analysis.

- The meta-ml sublayer is used exclusively for AI-based libraries, tools and applications, i.e. ML/DL frameworks and demo applications. At the moment, meta-ml contains a recipe for darknet, the deep learning library used for our object detection demo, but we are adding more libraries to this sublayer to demonstrate the performance of models on various hardware platforms.

- The meta-jetson sublayer contains all recipes and recipe enhancements needed to deploy our targets on Jetson platforms. This includes the recipe for the core BSP image, utility scripts that optimize hardware performance, and enhancements that add CUDA support to target-independent software capable of GPU computing.

In meta-antmicro, we also have system-releases. It is not a sublayer; instead, it stores the information and configuration files needed to build a specific BSP: git-repo manifest files for fetching all the necessary layers and distributing files to the appropriate locations, as well as Yocto configuration files for particular builds.
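A repo manifest is an XML file that lists remote servers, a default remote and revision, and one project element per git repository. Each project can have copyfile or linkfile children that place files elsewhere in the checkout. The Python sketch below shows what such a manifest resolves to. It assumes a simple manifest with absolute fetch URLs and ignores includes, relative URLs and other repo features. The helper summarize_manifest and the sample manifest in its usage are hypothetical, not the contents of the system-releases files.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List


def summarize_manifest(manifest_xml: str) -> List[Dict[str, object]]:
    """Resolve each <project> into its checkout path, clone URL, revision
    and the files it places elsewhere via <copyfile>/<linkfile>."""
    root = ET.fromstring(manifest_xml)
    remotes = {r.get("name"): r.get("fetch") for r in root.findall("remote")}
    default = root.find("default")
    defaults = default.attrib if default is not None else {}
    summary = []
    for project in root.findall("project"):
        name = project.get("name")
        remote = project.get("remote", defaults.get("remote"))
        summary.append({
            # repo checks the project out under its name when path is omitted
            "path": project.get("path", name),
            "url": remotes[remote].rstrip("/") + "/" + name,
            "revision": project.get("revision", defaults.get("revision")),
            "files": [(child.tag, child.get("src"), child.get("dest"))
                      for child in project
                      if child.tag in ("copyfile", "linkfile")],
        })
    return summary
```

Pinning a revision for every project (directly or through the default element) is what makes the fetched set of layers, and therefore the resulting BSP, reproducible.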

We have designed the meta-antmicro layer to be highly customizable and to allow as much reuse as possible. We can easily add recipe enhancements for other target platforms alongside Jetson, adapting the software build process to our particular use cases. This approach lets us easily extend optimized software builds to various existing and upcoming platforms.

We are currently working on creating a recipe for our open source Kenning framework, along with its dependencies, which will allow us to test and run tailored deep learning models for all kinds of target hardware.

Building a BSP image

All steps required to build a BSP from scratch are described in detail in the meta-antmicro README.md. Here is a quick overview of how to create a system running the darknet visualization demo on a Jetson board.

As previously mentioned, the system-releases directory contains manifest files needed to set up the entire project. You can fetch all the necessary code with:

```bash
repo init -u https://github.com/antmicro/meta-antmicro.git -m system-releases/darknet-edgeai-demo/manifest.xml
repo sync -j$(nproc)
```

To start building the BSP, run the following commands:

```bash
source sources/poky/oe-init-build-env
PARALLEL_MAKE="-j $(nproc)" BB_NUMBER_THREADS="$(nproc)" MACHINE="jetson-agx-xavier-devkit" bitbake darknet-edgeai-demo
```

When the build process is complete, the resulting image is stored in build/tmp/deploy/images/jetson-agx-xavier-devkit. Unpack the tegraflash package from that directory, put the board in recovery mode, and flash it by running the following command from the unpacked directory:

```bash
sudo ./doflash.sh
```

After flashing completes successfully, the board should boot up and start the darknet visualization demo automatically.
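The unpacking step can also be scripted, for example in a CI job that prepares a flashing bundle. The Python sketch below makes several assumptions. It assumes the tegraflash package is a tar archive whose name ends in .tegraflash.tar.gz and that it contains doflash.sh at its top level. The actual archive format and name depend on the meta-tegra version and configuration, so the glob pattern is a parameter. The helper unpack_tegraflash is hypothetical.

```python
import tarfile
from pathlib import Path


def unpack_tegraflash(deploy_dir: str, dest: str,
                      pattern: str = "*.tegraflash.tar.gz") -> Path:
    """Extract the newest tegraflash archive found in deploy_dir into dest
    and return the path of the doflash.sh script inside it."""
    archives = sorted(Path(deploy_dir).glob(pattern),
                      key=lambda p: p.stat().st_mtime)
    if not archives:
        raise FileNotFoundError(f"no {pattern} found in {deploy_dir}")
    out = Path(dest)
    out.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archives[-1], "r:*") as tar:  # detects gz/bz2/xz
        if hasattr(tarfile, "data_filter"):
            # Newer Pythons: refuse absolute paths and '..' escapes.
            tar.extractall(out, filter="data")
        else:
            tar.extractall(out)
    script = out / "doflash.sh"
    if not script.is_file():
        raise FileNotFoundError("doflash.sh not found in the archive")
    return script
```

Flashing itself still requires the board to be in recovery mode and doflash.sh to be run with sudo, so this sketch stops at returning the script path.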

These kinds of automated builds can, of course, be performed by a server in a cloud CI system, which we often deploy for our customers. This gives us complete control over what is built and deployed on the custom devices we create, and allows us to add robust OTA update capabilities, AI pipelines and remote management solutions for entire device fleets.
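A CI job can run the same build non-interactively. The Python sketch below assumes bash is available and that the checkout layout matches the repo commands above. Here, bitbake_env reproduces the PARALLEL_MAKE, BB_NUMBER_THREADS and MACHINE settings from the manual command. The build_image function sources oe-init-build-env and calls bitbake in a single shell, so the environment set up by that script is kept. Both function names are hypothetical. Also, os.cpu_count() only approximates nproc, since it ignores CPU affinity limits.

```python
import os
import shlex
import subprocess
from typing import Dict, Optional


def bitbake_env(machine: str, jobs: Optional[int] = None,
                base: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Environment equivalent to:
    PARALLEL_MAKE="-j N" BB_NUMBER_THREADS="N" MACHINE="<machine>" bitbake ..."""
    # os.cpu_count() approximates $(nproc); it may return None, hence the fallback.
    jobs = jobs or os.cpu_count() or 1
    env = dict(os.environ if base is None else base)
    env.update({
        "MACHINE": machine,
        "PARALLEL_MAKE": f"-j {jobs}",
        "BB_NUMBER_THREADS": str(jobs),
    })
    return env


def build_image(image: str, machine: str, poky_dir: str = "sources/poky") -> int:
    """Source the build environment and run bitbake in one bash process."""
    script = (f"source {shlex.quote(poky_dir)}/oe-init-build-env && "
              f"bitbake {shlex.quote(image)}")
    result = subprocess.run(["bash", "-c", script], env=bitbake_env(machine))
    return result.returncode
```

A CI step would call build_image("darknet-edgeai-demo", "jetson-agx-xavier-devkit") from the top of the repo checkout and fail the job on a non-zero return code.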

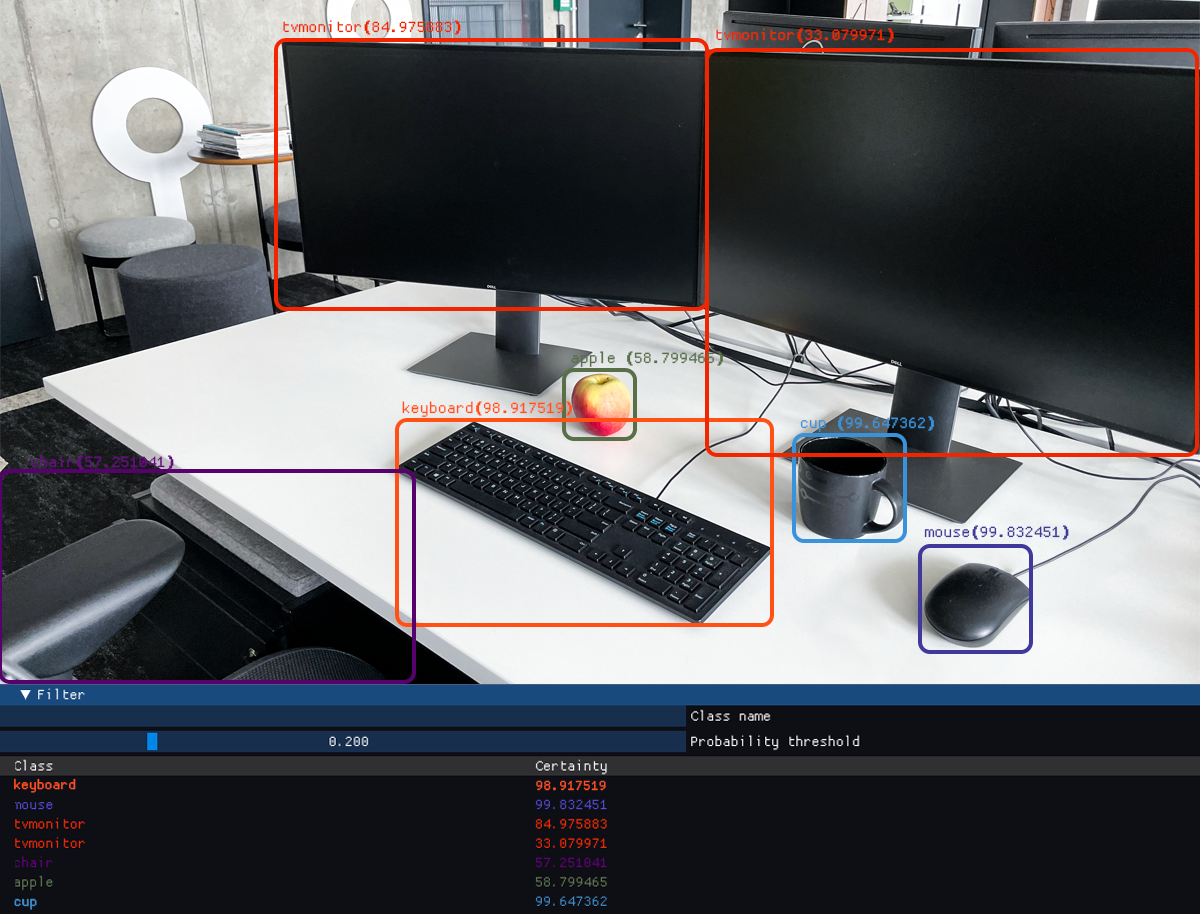

Object detection demo with darknet and ImGui

To showcase a simple Yocto-based system that can be a good starting point for development, we have created an AI demo application for object detection. It uses the YOLOv4 model, implemented in the darknet framework, for inference. Inference runs in a separate thread, so video from a file or camera is displayed smoothly while objects are being detected.
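One common way to decouple display from inference is a "latest frame" handoff. The display loop passes every frame to the inference thread without waiting. The thread processes only the newest frame, and the display draws whichever detections are most recent. The Python sketch below illustrates this pattern with threading.Condition. It is an assumption about how such a demo can be structured, not the demo's actual code. LatestFrameInference and the stand-in detector used with it are hypothetical.

```python
import threading
from typing import Any, Callable, Optional


class LatestFrameInference:
    """Runs a slow detector in a background thread on the newest frame only."""

    def __init__(self, detect: Callable[[Any], Any]) -> None:
        self._detect = detect
        self._cond = threading.Condition()
        self._pending: Optional[Any] = None  # newest frame not yet picked up
        self._result: Optional[Any] = None   # detections for last processed frame
        self._busy = False
        self._stopped = False
        self._thread = threading.Thread(target=self._worker, daemon=True)
        self._thread.start()

    def submit(self, frame: Any) -> None:
        """Called by the display loop for every frame; never waits for inference."""
        with self._cond:
            self._pending = frame  # overwrite: stale unprocessed frames are dropped
            self._cond.notify_all()

    def latest(self) -> Optional[Any]:
        """Most recent detections, to be drawn over the current frame."""
        with self._cond:
            return self._result

    def wait_idle(self, timeout: float = 5.0) -> bool:
        """Block until every submitted frame has been processed or skipped."""
        with self._cond:
            return self._cond.wait_for(
                lambda: self._pending is None and not self._busy, timeout)

    def close(self) -> None:
        with self._cond:
            self._stopped = True
            self._cond.notify_all()
        self._thread.join()

    def _worker(self) -> None:
        while True:
            with self._cond:
                self._cond.wait_for(
                    lambda: self._pending is not None or self._stopped)
                if self._stopped:
                    return
                frame, self._pending = self._pending, None
                self._busy = True
            result = self._detect(frame)  # slow call runs without holding the lock
            with self._cond:
                self._result = result
                self._busy = False
                self._cond.notify_all()
```

In a render loop, each iteration would call submit() with the captured frame and then draw latest() over it. The display rate is then bounded by capture and rendering rather than by the detector, at the cost of boxes lagging slightly behind the video.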

Visualization is implemented with the Dear ImGui library on top of OpenGL, which enables GPU-accelerated rendering at a high frame rate with very good quality. Dear ImGui also provides a wide array of highly customizable widgets. Our application displays bounding boxes for detected objects, along with their classes. It also has a widget that lets the user filter bounding boxes by class name and prediction score.
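Such a filter boils down to a predicate applied to each detection before it is drawn. The Python sketch below assumes each detection carries a class label, a score in [0, 1] and a box. The Detection type, its fields and filter_detections are hypothetical, not darknet's API.

```python
from dataclasses import dataclass
from typing import AbstractSet, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Detection:
    label: str                              # class name, e.g. "person"
    score: float                            # prediction score in [0, 1]
    box: Tuple[float, float, float, float]  # x, y, width, height in pixels


def filter_detections(detections: Iterable[Detection],
                      visible_classes: Optional[AbstractSet[str]] = None,
                      min_score: float = 0.5) -> List[Detection]:
    """Keep detections of the selected classes whose score reaches min_score.

    visible_classes=None means that all classes are shown.
    """
    return [d for d in detections
            if (visible_classes is None or d.label in visible_classes)
            and d.score >= min_score]
```

In a UI, visible_classes could come from per-class checkboxes and min_score from a slider. Filtering happens only at draw time, so the detector output itself is left untouched.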

Details regarding the demo can be found in the project’s README.md.

Reproducible and portable edge AI systems

Creating reproducible AI solutions is a daily part of our work. Implementing CI systems that can produce consistent, open BSPs from scratch allows us to create fully reproducible systems that are portable across the vast range of hardware platforms we provide engineering services for. Antmicro specializes in building open source, explainable AI on ARM and RISC-V architectures, which would not be possible without the portability offered by our open source approach. This flexibility allows us to easily adapt our AI projects to the newest, cutting-edge platforms, such as the upcoming Jetson Orin. If you are looking to build your next-gen product with this or a similar platform, get in touch with us at contact@antmicro.com and we will be happy to show you how to make all the hardware and software building blocks fit together into a solid embedded solution.